The healthcare industry has entered a transformative era. Agentic artificial intelligence (systems capable of autonomous reasoning, planning, and multi-step task execution with defined human oversight) is transitioning from research concept to enterprise deployment. Unlike traditional machine learning, which excels at narrow pattern recognition, or generative AI, which produces content reactively, agentic AI operates with goal-directed autonomy: decomposing complex objectives, coordinating specialized agents across disparate systems, and adapting strategies based on outcomes. This paradigm shift addresses healthcare’s most persistent challenges, including an administrative burden that consumes roughly 20 percent of institutional budgets, physician burnout from documentation requirements, and the growing complexity of clinical decision-making [1].

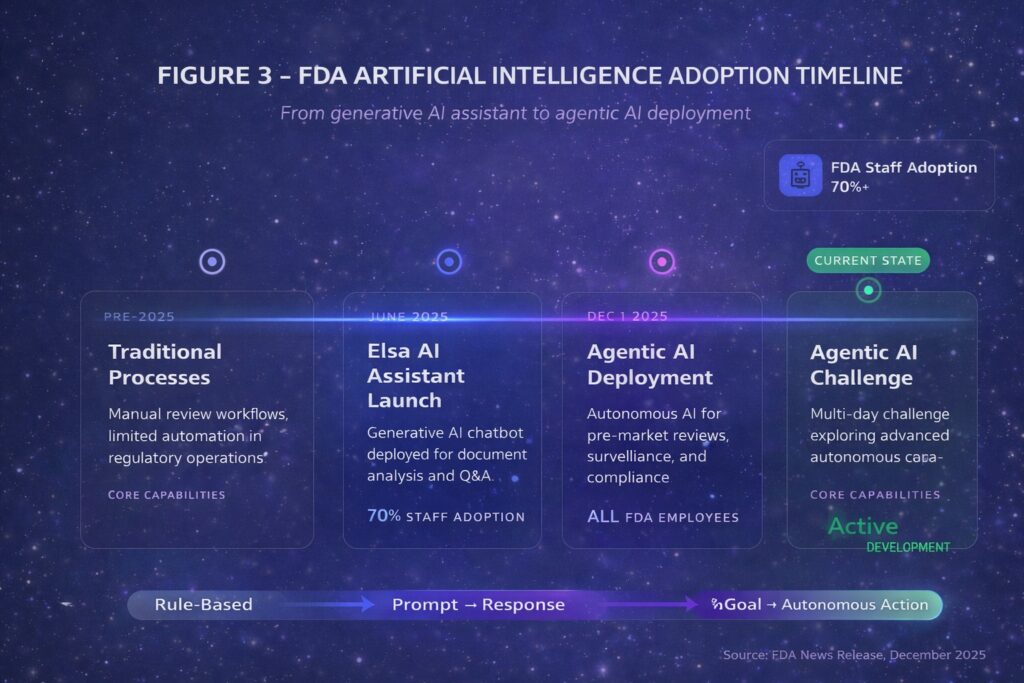

The acceleration in late 2025 has been remarkable. On December 1, 2025, the U.S. Food and Drug Administration announced agentic AI deployment for all agency employees, making it the first major regulatory body to institutionalize autonomous AI workflows for administrative functions including meeting management, document processing, and compliance operations [2]. Critically, these internal tools support agency operations but do not autonomously render pre-market review decisions; human reviewers retain accountability for regulatory determinations. Days later, the Department of Health and Human Services released its comprehensive AI strategy, positioning autonomous systems as central to federal health operations [3]. For clinicians, administrators, patients, and healthcare entrepreneurs, understanding this transformation has become essential.

Defining the agentic paradigm

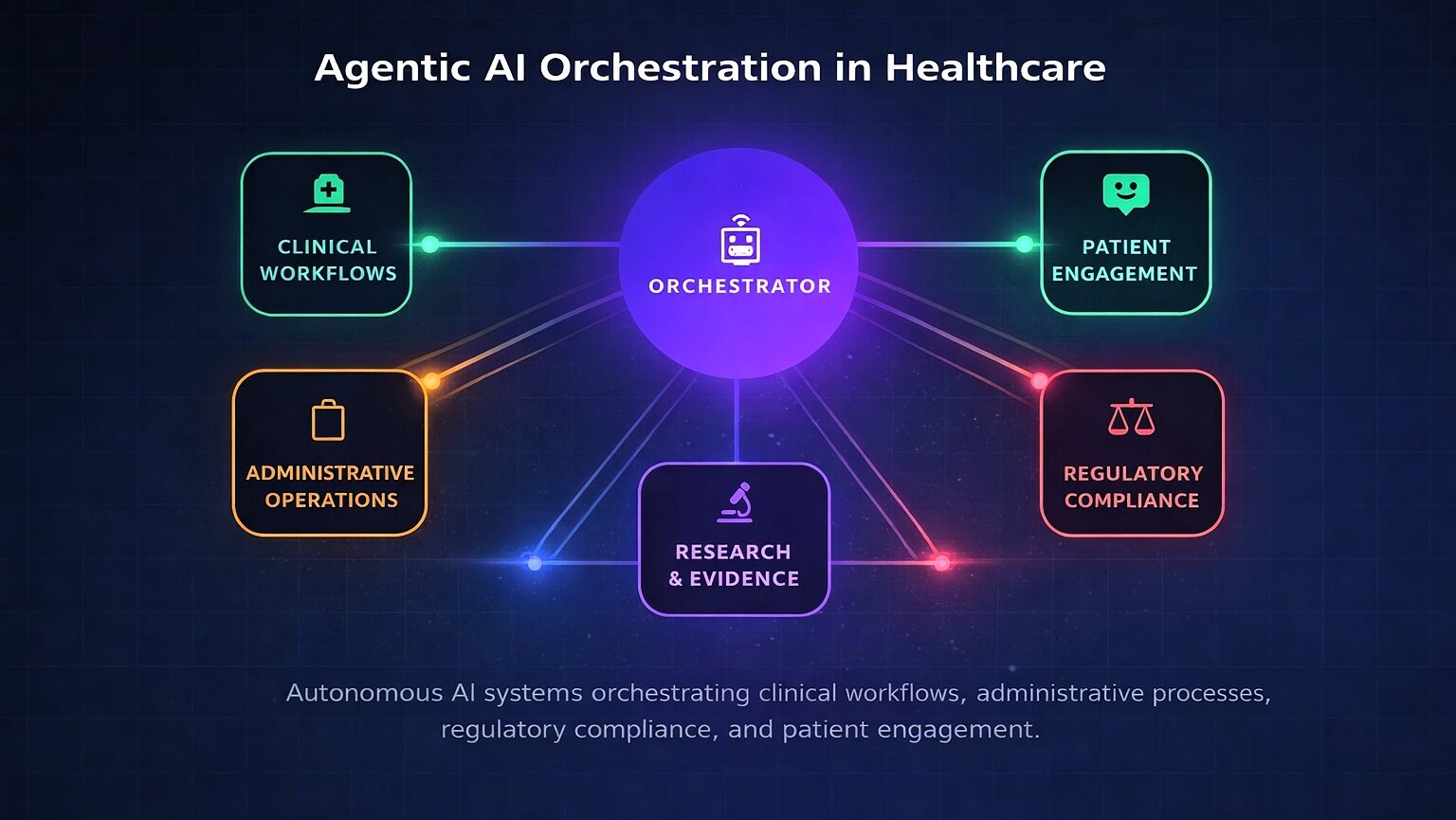

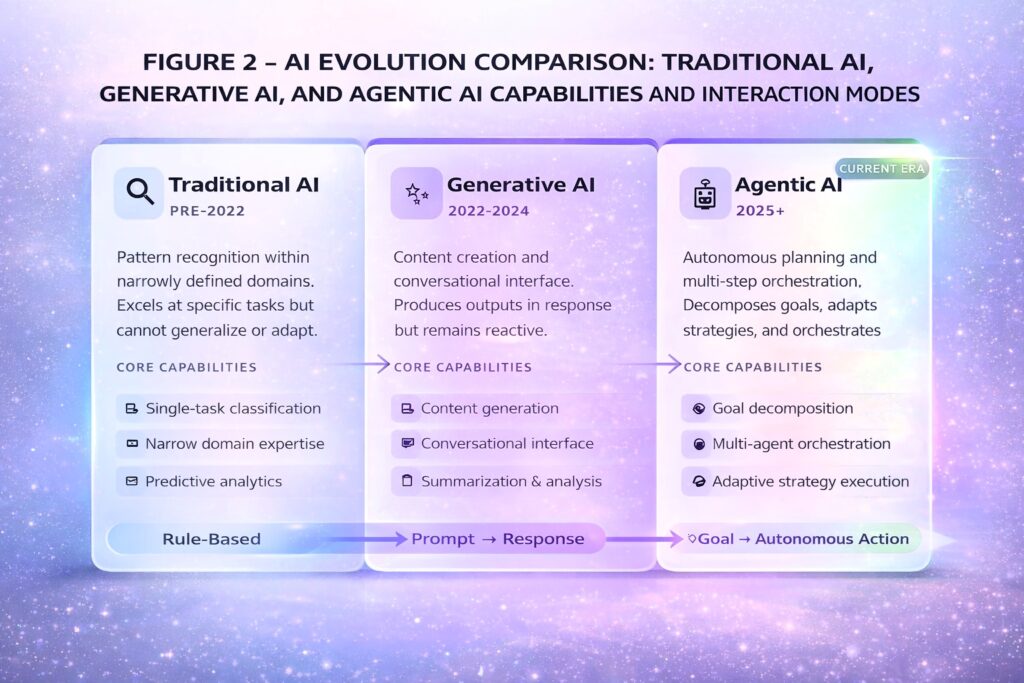

The distinction between agentic AI and its predecessors is substantive. Traditional machine learning excels at classification within narrow domains, such as identifying pathological findings in radiological images or predicting sepsis risk. Generative AI expanded to content creation and conversational interaction but remains fundamentally reactive. Agentic AI introduces systems that pursue defined goals with limited supervision, typically employing multiple specialized agents coordinated through an orchestration layer [4].
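To make the idea of an orchestration layer concrete, the following is a minimal sketch in Python. It is illustrative only: the agent names, the `Step` and `Orchestrator` types, and the tasks are hypothetical and do not represent any vendor's API. It shows a coordinator dispatching each step of a plan to a registered specialized agent.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    agent: str    # name of the specialized agent that should handle this step
    payload: str  # the task description handed to that agent


# Hypothetical specialized agents; real agents would call models or external systems.
def scheduling_agent(payload: str) -> str:
    return f"scheduled: {payload}"


def documentation_agent(payload: str) -> str:
    return f"drafted note: {payload}"


class Orchestrator:
    """Routes each step of a plan to the matching registered agent."""

    def __init__(self) -> None:
        self.agents: Dict[str, Callable[[str], str]] = {}

    def register(self, name: str, agent: Callable[[str], str]) -> None:
        self.agents[name] = agent

    def run(self, plan: List[Step]) -> List[str]:
        results = []
        for step in plan:
            if step.agent not in self.agents:
                # Fail loudly rather than silently skipping an unroutable step.
                raise KeyError(f"no agent registered for '{step.agent}'")
            results.append(self.agents[step.agent](step.payload))
        return results


orchestrator = Orchestrator()
orchestrator.register("scheduling", scheduling_agent)
orchestrator.register("documentation", documentation_agent)

plan = [
    Step("scheduling", "follow-up visit"),
    Step("documentation", "visit summary"),
]
results = orchestrator.run(plan)
print(results)
```

In this sketch the plan is fixed in advance, so it corresponds to workflow automation; a goal-directed system would also generate and revise the plan itself.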

Fig. 2

Technical precision requires distinguishing workflow automation from true goal-directed autonomy. Current enterprise deployments (Epic’s named agents, Microsoft’s orchestration framework) primarily automate predefined multi-step workflows with human checkpoints. Emerging research systems demonstrate more sophisticated autonomous reasoning, but these remain largely experimental. Research published in Frontiers in Artificial Intelligence found that agentic architectures can reduce cognitive workload by up to 52 percent compared to traditional clinical decision support, though this finding emerged from controlled simulation environments rather than clinical deployment [1]. Healthcare leaders should maintain appropriate skepticism about vendor claims until validated outcome data emerges.

The enterprise technology landscape

The market is consolidating around major platforms. Microsoft’s healthcare agent orchestrator, unveiled at Build 2025, provides pre-configured agents with multi-agent orchestration for enterprise deployment, with pilot implementations at Stanford Medicine and Oxford University Hospitals [5]. Epic Systems, serving approximately 38 percent of U.S. inpatient facilities with 325 million patient records, has deployed multiple AI agents: Emmie for patient engagement through MyChart, Art for provider communications, and Penny for revenue cycle management. However, access to these capabilities depends on Epic version, module licensing, and organizational readiness. Many customers remain on versions predating agent functionality, and 6-12 months of configuration effort should be expected before realizing full value [6].

Epic’s Cosmos AI foundation model initiative, trained on data from 275 million patients, may ultimately prove more transformative than individual named agents, because it represents a data advantage that competitors and startups cannot easily replicate. Nuance’s Dragon Copilot extends ambient documentation to nursing workflows, while the Atropos Evidence Agent proactively surfaces real-world evidence during clinical encounters without requiring explicit queries [7]. For the roughly 62 percent of U.S. inpatient facilities not on Epic, integration complexity increases substantially, and Microsoft’s platform may offer broader interoperability.

Table 1: Healthcare Agentic AI Platform Comparison (January 2026)

| Platform | Capabilities | Deployment Considerations |
| --- | --- | --- |
| Microsoft Healthcare Agent Orchestrator | Multi-agent orchestration, clinical trial matching, tumor board preparation | Azure AI Foundry; broader EHR interoperability; piloting at academic centers |
| Epic (Emmie, Art, Penny, Cosmos) | Patient engagement, provider communications, revenue cycle, foundation model | Version-dependent; 6-12 month configuration; strongest for existing Epic customers |
| Nuance Dragon Copilot | Ambient clinical and nursing documentation, clinical query response | Generally available; multi-EHR integration; requires workflow design |
| Atropos Evidence Agent | Proactive real-world evidence delivery at point of care | Pilot deployments; addresses evidence accessibility gap |

Regulatory landscape and liability considerations

The FDA’s December 2025 agentic AI deployment requires careful interpretation. The agency’s autonomous systems support administrative functions within a secure GovCloud environment that does not train on industry submissions. This builds on the June 2025 launch of Elsa, a generative AI assistant now used by over 70 percent of FDA staff [8]. The FDA’s database lists over 1,250 AI-enabled medical devices authorized for marketing, but the vast majority are narrow diagnostic imaging tools, not autonomous clinical agents. True agentic systems for clinical decision-making face the most stringent Class III regulatory pathway [9].

Fig. 3

International regulatory coordination is advancing. The European Medicines Agency and FDA jointly issued AI guidance for drug development, while Article 14 of the EU Artificial Intelligence Act mandates human oversight for high-risk AI systems, a requirement that will significantly constrain autonomous deployment in European markets [10]. State-level fragmentation compounds complexity: Illinois has prohibited AI from making independent therapeutic decisions since August 2025, Delaware is establishing an agentic AI regulatory sandbox, and Florida requires 24-hour written consent before AI records therapy sessions [11].

The liability landscape presents novel challenges. The learned intermediary doctrine traditionally shields device manufacturers when physicians exercise independent judgment, but agentic systems that execute autonomously may eliminate the “learned intermediary,” exposing manufacturers to direct liability. When multi-step autonomous workflows result in patient harm, allocating accountability across vendors, health systems, and supervising clinicians raises unsettled legal questions. Organizations should demand clear contractual liability allocation and appropriate indemnification provisions before deployment [12].

Patient safety and the automation challenge

Autonomous systems introduce safety considerations distinct from traditional clinical decision support. The patient safety literature documents automation complacency: the well-established tendency for humans to over-trust automated systems and fail to catch errors, a risk that increases with autonomy [13]. Agentic systems may fail silently, with errors propagating through multi-step workflows before becoming clinically apparent. Healthcare organizations must conduct prospective hazard analysis before deployment, identifying anticipated failure modes and establishing detection mechanisms.
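A short calculation illustrates why multi-step workflows deserve this scrutiny. The sketch below assumes, purely for illustration, that each step succeeds independently with the same probability; the 98 percent figure is a hypothetical input, not a measured reliability for any product. Under that assumption, a ten-step workflow completes without any error only about 82 percent of the time.

```python
def workflow_success_probability(step_reliability: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent, identical steps."""
    if not 0.0 <= step_reliability <= 1.0:
        raise ValueError("step_reliability must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_reliability ** steps


# Hypothetical per-step reliability of 98 percent across workflows of growing length.
for n in (1, 5, 10, 20):
    p = workflow_success_probability(0.98, n)
    print(f"{n:>2} steps: {p:.1%} chance of an error-free run")
```

Real workflows rarely satisfy the independence assumption, so this is a way to frame hazard analysis, not a substitute for it.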

Current adverse event reporting infrastructure was designed for human and device errors, not algorithmic failures. Health systems deploying agentic AI should establish dedicated mechanisms for identifying, reporting, and analyzing AI-related safety events — including near-misses that existing frameworks may not capture. Governance architecture must include decision audit trails documenting agent actions, intervention protocols for human override, and continuous monitoring for emergent behaviors not apparent during validation.
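As a rough illustration of these governance elements, the following Python sketch records every agent action in an audit trail and blocks high-risk actions until a human approves them. All names here (`AuditTrail`, `attempt_action`, the action labels, the reviewer identifier) are hypothetical, and the risk list is an assumption chosen for the example; a production system would need durable, tamper-evident storage and a clinically validated risk policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditEntry:
    timestamp: str
    agent: str
    action: str
    outcome: str                    # "executed" or "blocked_pending_review"
    reviewer: Optional[str] = None  # human who approved the action, if any


@dataclass
class AuditTrail:
    entries: List[AuditEntry] = field(default_factory=list)

    def record(self, agent: str, action: str, outcome: str,
               reviewer: Optional[str] = None) -> AuditEntry:
        entry = AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent=agent,
            action=action,
            outcome=outcome,
            reviewer=reviewer,
        )
        self.entries.append(entry)
        return entry


# Hypothetical policy: actions that must never run without human approval.
HIGH_RISK_ACTIONS = {"order_medication", "modify_care_plan"}


def attempt_action(trail: AuditTrail, agent: str, action: str,
                   approved_by: Optional[str] = None) -> bool:
    """Log the attempt; execute only if low-risk or explicitly approved."""
    if action in HIGH_RISK_ACTIONS and approved_by is None:
        trail.record(agent, action, "blocked_pending_review")
        return False
    trail.record(agent, action, "executed", reviewer=approved_by)
    return True


trail = AuditTrail()
first = attempt_action(trail, "care_agent", "send_reminder")
second = attempt_action(trail, "care_agent", "order_medication")
third = attempt_action(trail, "care_agent", "order_medication", approved_by="dr_lee")
for entry in trail.entries:
    print(entry.action, entry.outcome, entry.reviewer)
```

Logging blocked attempts alongside executed ones is a deliberate choice: near-misses become visible in the same record that captures completed actions.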

The patient perspective deserves explicit attention. Early research on patient acceptance of AI-mediated care suggests significant variation based on task type, transparency, and perceived physician oversight. Patients generally accept AI for administrative functions and diagnostic support but express reservations about autonomous treatment decisions [14]. Trust-building requires clear communication about when and how AI participates in care, something current disclosure frameworks inadequately address.

Workforce transformation and economic realities

Administrative tasks consume approximately 20 percent of healthcare institutional budgets, while physicians spend 13 percent of their time on similar responsibilities. Agentic AI targets precisely these activities [1]. However, whether autonomous systems will substitute for clinical labor or complement it remains an open question with profound implications for workforce planning, medical education, and specialty choice. Research from Johns Hopkins identified a “competence penalty” whereby physicians using AI are perceived as less capable by peers and patients, creating adoption barriers even when AI demonstrably improves outcomes [15].

Nursing workflows require particular attention. Nurses represent the largest clinical workforce and have distinct concerns about ambient AI documentation — including accuracy of captured information, workflow disruption during patient encounters, and implications for professional judgment. Dragon Copilot’s nursing extension requires careful workflow design and should not be deployed without nursing informatics involvement.

Economic analysis demands rigor beyond vendor projections. Implementation costs (including software licensing, integration, training, workflow redesign, governance infrastructure, and ongoing maintenance) are substantial. The productivity paradox, wherein IT investments have historically failed to improve measured healthcare productivity, warrants appropriate skepticism [16]. Reimbursement implications remain unclear: will payers reimburse for AI-augmented care, or will they demand discounts based on presumed efficiency gains? These dynamics will significantly influence adoption economics.

Strategic framework for healthcare organizations

Healthcare leaders face a fundamental build, buy, or partner decision. Organizations with substantial technical capacity and differentiated use cases may justify custom development. Most will purchase vendor solutions, with the critical choice being platform selection based on existing EHR infrastructure and integration requirements. Partnership models — embedding vendor capabilities within organizational workflows through co-development arrangements — offer middle-ground approaches.

Market trajectory data reinforces strategic urgency. While less than one percent of enterprise software incorporated agentic AI in 2024, Gartner projects 33 percent adoption by 2028, with the global market reaching $200 billion by 2034 [17]. Platform consolidation around Microsoft and Epic creates challenges for startups, though opportunities remain in vertical specialization, underserved care settings, and geographic niches where incumbents lack focus. Organizations developing institutional competencies now will establish advantages that later entrants cannot easily replicate.
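The pace implied by the adoption projection can be made explicit with a compound growth calculation. The sketch below treats the 2024 share as exactly one percent, which is an assumption: the source says only "less than one percent," so the result is a lower bound on the implied rate. The function name is illustrative.

```python
def implied_annual_growth(start_share: float, end_share: float, years: int) -> float:
    """Compound annual growth rate needed to move from start_share to end_share."""
    if start_share <= 0 or end_share <= 0:
        raise ValueError("shares must be positive")
    if years <= 0:
        raise ValueError("years must be positive")
    return (end_share / start_share) ** (1 / years) - 1


# Assumption: 1 percent in 2024 (an upper bound on the stated starting point),
# rising to the projected 33 percent in 2028.
rate = implied_annual_growth(0.01, 0.33, 2028 - 2024)
print(f"Implied compound annual growth: at least {rate:.0%} per year")
```

Under that assumption, the share would need to grow by roughly 140 percent a year, more than doubling annually for four years running.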

Table 2: Implementation Prioritization Framework

| Phase | Use Cases | Risk Profile | Timeline |
| --- | --- | --- | --- |
| Immediate (2026) | Scheduling, prior authorization, patient messaging triage | Low clinical risk; high administrative burden reduction | 3-6 months |
| Near-term (2026-2027) | Ambient documentation, coding assistance, clinical summaries | Moderate risk; clinician review required | 6-12 months |
| Medium-term (2027-2028) | Clinical decision support, care coordination | Higher risk; robust governance essential | 12-24 months |
| Longer-term (2028+) | Autonomous monitoring, closed-loop systems | Highest risk; regulatory clarity required | 24+ months |

Strategic implications

The trajectory points beyond workflow efficiency toward fundamental care model transformation. When autonomous systems can conduct comprehensive health assessments in patients’ homes, and when diagnostic capabilities that once required tertiary centers become available at community pharmacies, the very concept of a “medical visit” will evolve. Geographic distribution implications deserve attention: agentic AI may extend specialist reach to underserved areas, including countries that have only a handful of imaging experts, potentially democratizing access to clinical expertise [18].

For healthcare leaders, entrepreneurs, and clinicians, the imperative is clear. Agentic AI is not a speculative future but an operational present reshaping regulatory processes, clinical workflows, patient expectations, and competitive dynamics. Organizations that develop competencies in autonomous system governance, workforce adaptation, safety monitoring, and strategic deployment will capture the efficiency gains and quality improvements these technologies enable while managing the substantial risks they introduce. The transformation is underway. The strategic question is no longer whether to engage but how to lead: safely, ethically, and with appropriate humility about what remains unknown.

References

1. Hinostroza Fuentes N, Karim S, Tan L, AlDahoul N. AI with agency: A vision for adaptive, efficient, and ethical healthcare. Frontiers in Artificial Intelligence. 2025;8.
2. U.S. Food and Drug Administration. FDA Expands Artificial Intelligence Capabilities with Agentic AI Deployment. FDA News Release. December 1, 2025.
3. U.S. Department of Health and Human Services. HHS Artificial Intelligence Strategy. December 4, 2025.
4. IBM. What is Agentic AI? IBM Technology Documentation. 2025.
5. Microsoft. Healthcare Agent Orchestrator. Microsoft Build 2025 Announcement. May 2025.
6. Healthcare IT News. Epic unveils AI agents, showcases new foundational models at UGM 2025. 2025.
7. Microsoft Industry Blog. Agentic AI: Shaping the future of healthcare innovation. November 18, 2025.
8. Parenteral Drug Association. News Brief: FDA Expands AI with Agentic Deployment. December 2025.
9. Bipartisan Policy Center. FDA Oversight: Understanding the Regulation of Health AI Tools. November 10, 2025.
10. European Pharmaceutical Review. EMA and FDA issue joint AI guidance for medicine development. January 2026.
11. Manatt, Phelps & Phillips, LLP. Health AI Policy Tracker. 2025.
12. Price WN, Gerke S, Cohen IG. Potential liability for physicians using artificial intelligence. JAMA. 2019;322(18):1765-1766.
13. Parasuraman R, Manzey DH. Complacency and bias in human use of automation. Human Factors. 2010;52(3):381-410.
14. Longoni C, Bonezzi A, Morewedge CK. Resistance to medical artificial intelligence. Journal of Consumer Research. 2019;46(4):629-650.
15. Medical Economics. Physicians who use AI face a ‘competence penalty,’ Johns Hopkins study finds. 2025.
16. Himmelstein DU, Jun M, Busse R, et al. A comparison of hospital administrative costs in eight nations. Health Affairs. 2014;33(9):1586-1594.
17. Gartner. Top Strategic Technology Trends for 2025: Agentic AI. 2025.
18. Microsoft Research. The AI Revolution in Medicine, Revisited. Microsoft Research Podcast. July 23, 2025.

About the author

Dr. Srikanth Mahankali is a recognized authority in medical AI implementation and policy. As Chief Executive Officer of Shree Advisory & Consulting and a member of the NSF/MITRE AI Workforce Machine Learning Working Group, he has contributed to the development of national AI strategy while advancing responsible innovation in healthcare technology.