Artificial intelligence has entered dentistry not with fanfare, but through a quiet transformation. In practices across North America, clinicians are discovering that AI scribes can record consultation notes while they focus on patients, enhancing compassionate care. Diagnostic algorithms can identify subtle pathologies in radiographs, and machine learning-powered administrative assistants can simplify insurance authorizations. What once seemed like science fiction has now become a practical reality.

Yet beneath this transformation lies a critical distinction that determines safety, compliance, and professional liability: the difference between AI foundation models and the specialized dental applications built upon them. This distinction is where risk assessment and regulatory oversight actually operate, and where dental professionals must focus their attention.

This review offers a practical framework for navigating this rapidly changing landscape. Since dental AI evolves as quickly as the oral microbiome itself, we intend to update this analysis yearly to reflect new developments, emerging applications, and changing safeguards.

Frontier models vs. dental AI applications

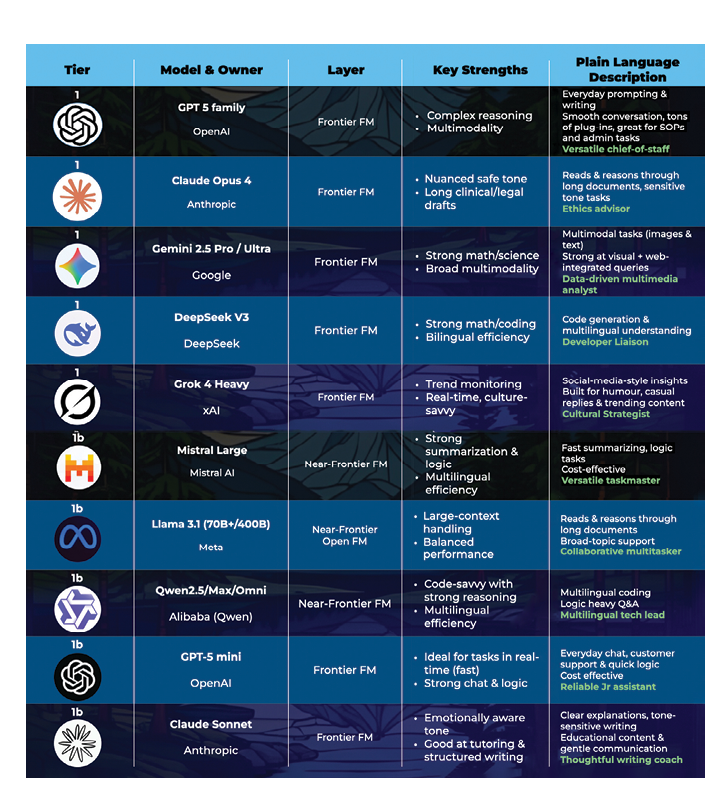

Frontier models such as GPT-5, Claude, and Gemini are powerful computational engines that excel at reasoning, language generation, and multimodal interpretation. They can draft text, analyze images, and synthesize information across diverse domains. However, they are not designed for healthcare use. They lack the privacy safeguards, audit trails, and regulatory compliance features required for clinical deployment. Most importantly, they cannot safely manage personal health information.

The real change occurs at the application level. Dental AI applications are built on top of foundation models and add safeguards such as data handling that complies with the Personal Information Protection and Electronic Documents Act (PIPEDA), encryption, audit trails, and domain-specific validation. Examples include Diagnocat’s radiographic analysis and Scribeberry’s clinical documentation.

This architectural distinction is vital because it determines where risks surface and how they must be managed. The same foundation model that powers a consumer chatbot can also support a secure dental scribe, but these applications have fundamentally different risk profiles based on their data governance, clinical integration, and regulatory compliance.

Understanding this separation clarifies why dental professionals cannot simply ask whether “AI” is safe. The question must always be whether a specific application is safe for its intended clinical use, given the safeguards for the data and decisions involved.

Adoption in dentistry remains in its early phases, with practices testing out scribes, image-assisted diagnostics, and patient communication tools that develop quickly, sometimes within months. By the time this review is published, some examples mentioned here may already seem outdated, replaced by faster and more secure alternatives. This rapid pace of change highlights why frameworks for risk assessment are more important than just listing current tools.

Table 1: Overview of frontier and near-frontier models

Why AI matters for dental professionals

For dental professionals, the significance of AI is not just theoretical; it is practical. Much of its potential lies in improving efficiency. Documentation and administrative tasks take up a disproportionate amount of clinical time, often at the expense of patient care. AI scribes and assistants can help free up this time by decreasing hours spent on charting, referral letters, and insurance documentation. A recent systematic review found that AI scribes enhance workflow efficiency, boost clinician engagement, and lessen the documentation burden, although their overall effect on burnout remains mixed.1,2

Beyond time, AI also offers the potential for greater accuracy and consistency. A recent systematic review shows that AI tools are advancing across diagnostic, administrative, and educational workflows in dentistry, with reported improvements in accuracy and efficiency.3 Radiographic analysis platforms can detect subtle pathologies with higher sensitivity, giving patients earlier diagnoses and more timely interventions. Clinical decision support tools incorporate evidence-based guidelines at the point of care, helping providers explain treatment options clearly and confidently. Collectively, these applications can help standardize record-keeping and clinical reasoning among providers while also improving the patient experience.

These benefits, however, come with a warning. As AI tools become more embedded in daily workflows, there is increasing evidence that overreliance can erode clinical vigilance and skills rather than strengthen them. A recent multicentre study in colonoscopy found that endoscopists who routinely used AI for polyp detection experienced a noticeable decline in their own adenoma detection rates when the AI was turned off.4 In other words, once the safety net was removed, clinical performance declined.

The same concern exists in dentistry. If practitioners get used to AI flagging caries, assessing bone loss, or drafting periodontal charts, their vigilance might slowly decline. Over time, the interpretive and diagnostic skills that underpin clinical judgment could weaken if they are not regularly practiced.

This creates a significant responsibility for dental schools and continuing education providers. Future curricula will need to ensure that students and practising clinicians learn to use AI confidently without relying on it excessively. Simulation training, deliberate practice without AI assistance, and structured validation exercises may all be necessary to maintain core competencies.

Those who achieve this balance will be best placed to harness AI’s benefits without undermining their own expertise or patient safety.

Regulatory frameworks and risk categories

Not all AI applications pose the same level of risk. The EU AI Act offers one of the clearest structures for categorization, establishing a tiered framework where regulatory obligations increase with the potential impact on health, safety, and fundamental rights.

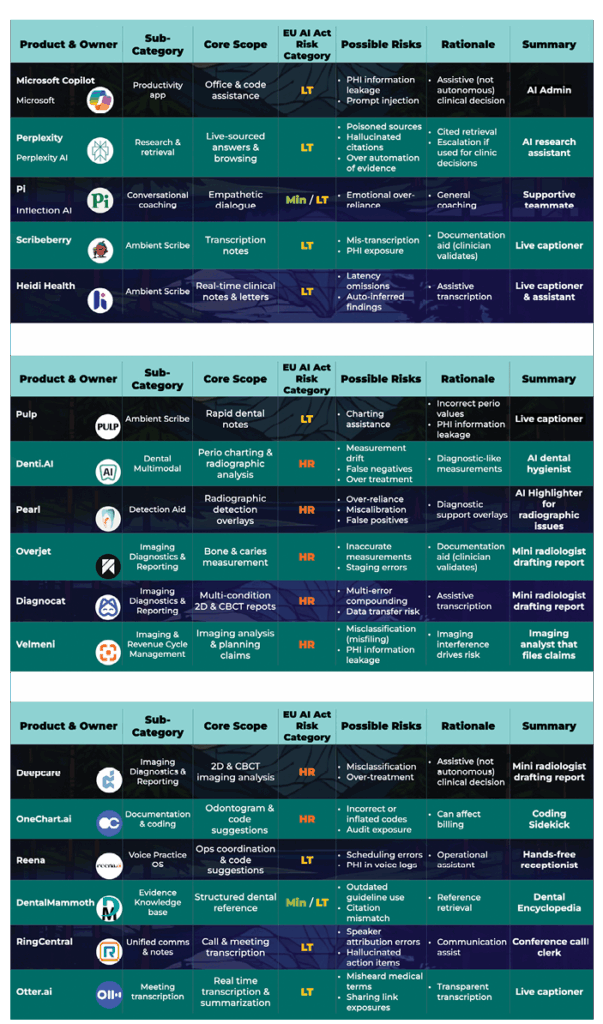

High-risk systems include diagnostic aids and radiographic overlays, where errors could directly impact treatment outcomes. These applications demand strict oversight, documented validation, and continuous monitoring to ensure safety. Limited-risk systems encompass many frontier and near-frontier tools when used in supportive roles such as staff training or drafting patient communications. In these settings, errors are manageable, and the user bears full responsibility for outputs.

In dentistry, the context of application is crucial. An AI-powered radiograph-triaging tool would be classified as high risk because errors could affect diagnostic accuracy and treatment planning. A chart-drafting assistant may be considered limited risk if it functions within a secure, closed system, but the same tool could be categorized as higher risk if patient information is shared on open, externally hosted platforms. This emphasizes why data governance and system design are just as important as the underlying model.

Although Canada is not governed by the EU AI Act, the framework offers a useful perspective for dental professionals. Canadian clinicians must also adhere to applicable privacy legislation, such as Ontario’s Personal Health Information Protection Act (PHIPA), and prepare for the proposed Artificial Intelligence and Data Act (AIDA), which intends to regulate high-impact AI systems, though its definitions and enforcement mechanisms are still incomplete. The Royal College of Dental Surgeons of Ontario (RCDSO) has issued important draft guidance emphasizing that dentists remain professionally responsible for all clinical decisions, whether or not AI tools are used.5

In the United States, the Health Insurance Portability and Accountability Act (HIPAA) governs patient data, while the Food and Drug Administration (FDA) regulates AI-enabled medical devices such as diagnostic imaging platforms. U.S. dental boards have yet to issue AI-specific guidance, but as in Canada, clinicians remain fully responsible for treatment outcomes.

Taken together, these frameworks demonstrate a shared principle: while AI can support documentation, diagnostics, or patient communication, the ultimate responsibility for patient care lies with the practitioner.

An analogy: The power grid of AI

Frontier and near-frontier AI models such as GPT-5, Claude, and Gemini can be likened to power stations. They supply the generative capacity that drives dental applications. By themselves, these models are neutral: they produce outputs but have no impact on patients or practices until that capacity is harnessed for specific uses.

Most of the time, this capacity reaches users through protective infrastructure: transformers, substations, and circuit breakers in electrical terms; middleware, APIs, and vendor platforms in AI terms. These layers add compliance features, audit trails, and usage controls before the AI reaches dental applications. Just as electrical grids rely on safety systems, AI deployments require safeguards such as human oversight, validation protocols, and secure data governance.

Dentists can also access foundation models directly via web browsers. The underlying model is the same, but the protective infrastructure is not. Direct use lacks healthcare-specific safeguards such as audit trails, PHI protection, and validation workflows, which introduces compliance risks under privacy laws as well as professional liability issues. The risk comes not from the model itself, but from the absence of the safeguards that make it suitable for clinical use.

Once electricity arrives at a device, the outcomes vary depending on the application.

Minimal risk: The status light

A flickering indicator light carries almost no consequence. In dentistry, this is like AI used for drafting newsletters or patient education handouts. Errors are generally inconsequential and have no bearing on treatment outcomes. At most, they may cause mild inconvenience or require minor corrections, but they do not compromise patient safety.

Limited risk: The thermostat

If a thermostat fails, comfort is disrupted but safety remains intact. In dentistry, this is similar to AI scribes or administrative assistants such as Scribeberry or Reena.ai. Errors may frustrate staff or patients, but with human oversight, they do not impact treatment outcomes. The main cost is inefficiency or a loss of trust in administrative workflows, not clinical harm.

High risk: The hospital ventilator

Failure of a ventilator has immediate and serious consequences. In dentistry, this parallels diagnostic applications such as Diagnocat, Pearl, or Overjet that interpret radiographs or CBCT scans. These tools extend capability, but if clinicians rely uncritically on their outputs, errors may cascade into delayed diagnoses or inappropriate treatment. For this reason, they require systematic validation, continuous monitoring, and explicit regulatory safeguards to remain safe in practice.

Unacceptable risk: The electric chair

Electricity can also be used to cause harm intentionally, as in the electric chair. In dentistry, this is similar to AI applications that are designed or marketed in ways that bypass professional safeguards and mislead patients. An example is a consumer-facing app that promotes self-diagnosis of oral cancer lesions without clinical supervision. By design, such systems compromise safety, weaken public trust, and breach core ethical standards. Within the tiered logic of the EU AI Act, they would arguably belong in the unacceptable risk category because they not only put patients at risk but also threaten the integrity of the profession itself.

The lesson is clear. The same power plants that reliably supply lights, thermostats, and ventilators can also power an electric chair. Similarly, the same frontier models that support scribes, scheduling tools, and diagnostic platforms can also enable applications that pose unacceptable risks. The key lies in context: not “Is the model safe?” but “Is this specific use safe in my practice, with the safeguards and oversight I have in place?”

Table 2: Dental AI applications with possible EU AI Act Risk Categories

This highlights why data governance is crucial. If the system is closed, encrypted, and does not reuse patient data for training, the application presents a lower risk. If it is open, externally connected, or opaque in how it manages information, the same function might be subject to high-risk obligations.
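The way context shifts a tool between tiers can be sketched as a simple first-pass triage. The example below is a hypothetical illustration in Python: the `AIUseCase` fields, the `triage_risk` function, and the ordering of the checks are assumptions made for discussion, loosely following the grid analogy above. It is not a legal classification under the EU AI Act or any other framework.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


@dataclass
class AIUseCase:
    """Hypothetical description of how a tool is used in a practice."""
    bypasses_clinician: bool        # e.g. patient self-diagnosis with no oversight
    informs_diagnosis: bool         # e.g. radiograph triage or CBCT interpretation
    handles_patient_data: bool      # charts, recordings, images
    closed_and_encrypted: bool      # data stays in a secure, closed system
    reuses_data_for_training: bool  # vendor trains on patient data


def triage_risk(use: AIUseCase) -> RiskTier:
    """First-pass tier for discussion only; not a legal classification."""
    if use.bypasses_clinician:
        return RiskTier.UNACCEPTABLE
    if use.informs_diagnosis:
        return RiskTier.HIGH
    if use.handles_patient_data:
        # Same function, different tier depending on data governance.
        governed = use.closed_and_encrypted and not use.reuses_data_for_training
        return RiskTier.LIMITED if governed else RiskTier.HIGH
    return RiskTier.MINIMAL


# Illustrative cases (assumed, not drawn from any vendor's documentation).
newsletter = AIUseCase(False, False, False, True, False)
closed_scribe = AIUseCase(False, False, True, True, False)
open_scribe = AIUseCase(False, False, True, False, True)

for label, case in [("newsletter", newsletter),
                    ("closed scribe", closed_scribe),
                    ("open scribe", open_scribe)]:
    print(label, "->", triage_risk(case).value)
```

In this sketch the two scribe cases differ only in their data governance flags, yet they land in different tiers, which is the point the paragraph above makes.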

Recognizing these categories is critical for responsible adoption. It allows practitioners to embrace AI where risks are minimal and productivity gains are high, while applying stricter validation and compliance processes to applications that directly influence patient care and safety. While regulatory frameworks like the EU AI Act provide a useful lens for assessing AI risk, dentists must also confront practical barriers that determine whether these systems can be implemented effectively in daily practice.

Challenges ahead: Barriers to responsible AI adoption

While the potential of dental AI is clear, several barriers may limit adoption in the near term. Cost and integration remain significant challenges, particularly for smaller practices that lack the infrastructure to implement advanced tools or the resources to train staff. Liability and insurance coverage also remain unsettled when errors occur in AI-assisted workflows, leaving clinicians uncertain about who bears responsibility for adverse outcomes. The digital divide poses another concern, as large dental service organizations can adopt AI rapidly while independent practices struggle to keep pace. Recent reviews confirm that many AI applications validated in research settings still face steep barriers when deployed in day-to-day practice, underscoring the need for implementation research before widespread adoption.7

Beyond these operational barriers, AI adoption also prompts broader questions of equity, especially regarding which patients and practices will access its benefits.

Health equity and access

As AI becomes more integrated into dentistry, questions of equity must be addressed. Advanced systems are often expensive to purchase and maintain, which means that large dental service organizations and well-resourced practices are the first to adopt them. Smaller independent practices and community-based providers may be left behind. If access to AI is uneven, patients in underserved regions risk missing out on earlier diagnoses, streamlined care, and the efficiencies these tools can provide.

To avoid widening disparities, safeguards and funding models will be needed to support adoption in smaller practices. Equally important is ensuring that dental schools and continuing education programs provide training on AI use for all clinicians, not just those with access to the latest platforms. Only by making these tools broadly available and properly taught can AI strengthen, rather than fragment, the overall delivery of dental care.

These considerations highlight why the AI-powered ecosystem must be developed not only for innovation but also for inclusion, ensuring that improvements in efficiency and accuracy extend to every part of the profession. Without deliberate safeguards, AI could worsen inequities; ensuring fair access must be as central as ensuring accuracy.

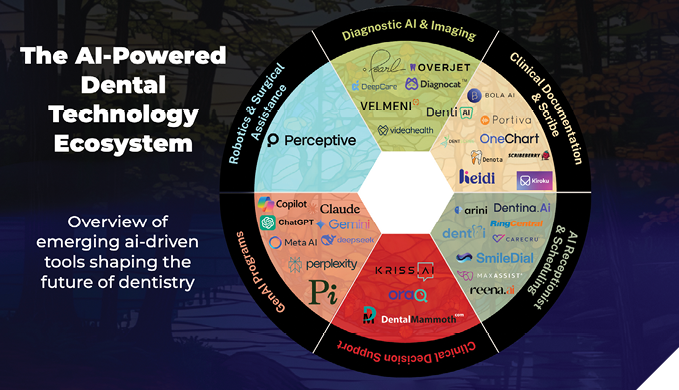

The AI-powered dental ecosystem

While frontier and near-frontier models lay the groundwork, their influence becomes evident only when they are utilized to power specialized dental applications. As this ecosystem wheel shows, AI-driven tools are emerging across nearly every dental discipline, going well beyond general practice (Figure 1).

Fig. 1

Diagnostic AI & imaging

Companies like Diagnocat, Pearl, Overjet, DeepCare, and Velmeni are at the forefront of radiographic interpretation. Their tools identify bone levels and decay, and flag overhangs and incomplete endodontic treatment. Some even produce draft reports from CBCT scans. These applications aim to standardize findings and reduce fatigue-related oversights, but they still require professional validation and do not replace clinical expertise.

Clinical documentation & scribe

Ambient scribe solutions like Scribeberry and Heidi automatically capture conversations and convert them into structured notes, referral letters, or chart entries. By reducing the time spent on documentation, they enable clinicians to focus more on patient care. The main challenge remains ensuring transcription accuracy and safeguarding patient privacy.

AI receptionist & scheduling

Administrative assistants like Reena.ai, DentAI, SmileDial, and Dentina.AI simplify appointment scheduling, patient reminders, and office communication. These tools serve as “hands-free receptionists,” reducing workload and potentially minimizing scheduling errors.

Clinical decision support

Platforms like OraQ, Kriss.ai, and DentalMammoth utilize AI to offer evidence-based treatment suggestions, incorporate guidelines, and aid clinician decision-making. While these tools show great potential, they must be thoroughly validated to prevent bias or outdated information from being incorporated into treatment plans.

Why this matters across all domains of dentistry

The reach of AI in dentistry spans every specialty, with applications tailored to distinct clinical needs (Figure 1). Orthodontics is adopting AI for cephalometric tracing and aligner planning. Endodontics is applying AI to CBCT analysis and canal detection. Prosthodontics is leveraging AI for digital design and implant planning. Oral pathology is integrating AI for cellular-level diagnostics, with platforms such as Proteocyte advancing early cancer detection. Surgical and endodontic practices are beginning to explore AI-driven robotics and navigation systems, which aim to improve precision, reduce human error, and support minimally invasive approaches. Although still evolving, robotic assistance represents one of the most promising frontiers of dental AI.

Recent reviews confirm that this scope is broadening further, with applications emerging in pediatric and general dentistry for early caries detection, growth and development assessment, and preventive planning.6 As these specialty-specific innovations expand, AI is becoming a unifying infrastructure across the profession, driving consistency, enhancing efficiency, and improving patient outcomes across all domains of care.

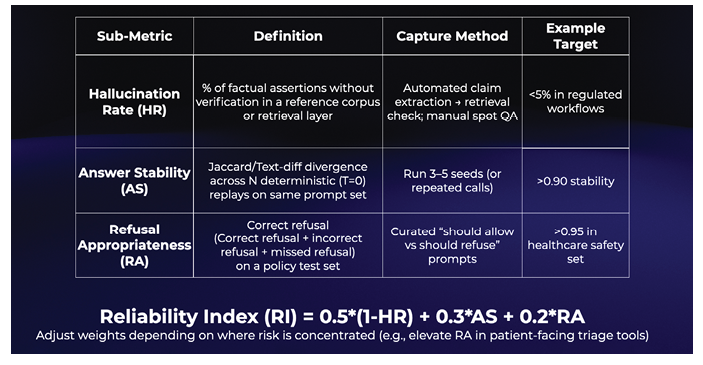

Table 3: A proposed operational framework for reliability in dental AI

Measuring reliability in dental AI

As AI tools enter regulated healthcare environments, reliability becomes just as important as capability. To stimulate discussion, we propose a conceptual framework for thinking about reliability in dentistry. This framework is not intended as a standard but as an illustration of how performance could eventually be measured once validated by empirical research.

Hallucination Rate (HR)

This refers to how often an AI system generates information that is factually incorrect or unsupported by evidence. In dentistry, an AI that fabricates periodontal findings or misidentifies radiographic features could mislead clinicians if unchecked.

Answer Stability (AS)

This measures how consistently the AI responds when asked the same question multiple times. For example, a documentation assistant that produces different chart notes under the same conditions would raise concerns about reliability.

Refusal Appropriateness (RA)

This assesses whether the AI refuses to answer when it should, such as declining to provide a diagnosis without clinical data, while still offering appropriate responses when safe to do so. In dentistry, this protects patients from unsafe or misleading advice.
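As a rough illustration of how these three measures might be estimated, the Python sketch below assumes a hypothetical evaluation log in which a human reviewer has labelled each AI response. The `EvalRecord` format, the exact-match definition of stability, and the sample records are illustrative assumptions, not part of the proposed framework and not measured data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class EvalRecord:
    """One reviewed AI response (hypothetical format, for illustration only)."""
    prompt_id: str       # identifies the question that was asked
    response: str        # the AI's answer, normalised by the reviewer
    hallucinated: bool   # reviewer found incorrect or unsupported content
    should_refuse: bool  # reviewer judged that refusing was the safe choice
    did_refuse: bool     # the AI actually declined to answer


def hallucination_rate(records):
    """HR: share of responses containing incorrect or unsupported content."""
    return sum(r.hallucinated for r in records) / len(records)


def answer_stability(records):
    """AS: average share of repeated answers matching the most common answer."""
    by_prompt = {}
    for r in records:
        by_prompt.setdefault(r.prompt_id, []).append(r.response)
    shares = [
        Counter(answers).most_common(1)[0][1] / len(answers)
        for answers in by_prompt.values()
    ]
    return sum(shares) / len(shares)


def refusal_appropriateness(records):
    """RA: share of responses where refusing or answering matched the reviewer."""
    return sum(r.did_refuse == r.should_refuse for r in records) / len(records)


# Invented sample: one charting question asked four times,
# one diagnosis-without-data question asked twice.
records = [
    EvalRecord("q1", "mild bone loss", False, False, False),
    EvalRecord("q1", "mild bone loss", False, False, False),
    EvalRecord("q1", "mild bone loss", False, False, False),
    EvalRecord("q1", "moderate bone loss", True, False, False),
    EvalRecord("q2", "refused", False, True, True),
    EvalRecord("q2", "likely abscess", True, True, False),
]

print(hallucination_rate(records))
print(answer_stability(records))
print(refusal_appropriateness(records))
```

Exact-match comparison is the simplest possible stability measure; a real evaluation of free-text chart notes would likely need a clinically meaningful notion of equivalence, which this sketch does not attempt.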

To illustrate how these factors might be combined, one possible approach is a Reliability Index (RI), expressed as:

RI = 0.5 × (1 – HR) + 0.3 × AS + 0.2 × RA

This weighting prioritizes reducing hallucinations, with smaller weights on stability and refusal appropriateness. The weights are only placeholders. They need thorough empirical validation and may differ depending on the context. For example, in patient-facing applications, refusal appropriateness might be weighted more heavily to enhance safety.
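The weighted sum can be written out in a few lines of Python. This is a minimal sketch that assumes HR, AS, and RA are each expressed as a proportion between 0 and 1; the function name `reliability_index`, the input scores, and the alternative patient-facing weights are hypothetical values chosen for illustration only.

```python
def reliability_index(hr, as_, ra, weights=(0.5, 0.3, 0.2)):
    """RI = w_hr * (1 - HR) + w_as * AS + w_ra * RA, with all inputs in [0, 1]."""
    for name, value in (("HR", hr), ("AS", as_), ("RA", ra)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    w_hr, w_as, w_ra = weights
    if abs(w_hr + w_as + w_ra - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return w_hr * (1.0 - hr) + w_as * as_ + w_ra * ra


# Hypothetical scores for a documentation assistant (not measured data).
default_ri = reliability_index(hr=0.10, as_=0.90, ra=0.80)

# Same scores, with refusal appropriateness weighted more heavily,
# as might suit a patient-facing tool (weights are illustrative only).
patient_facing_ri = reliability_index(0.10, 0.90, 0.80, weights=(0.4, 0.2, 0.4))

print(round(default_ri, 2), round(patient_facing_ri, 2))
```

With these placeholder inputs, the default weighting works out to 0.5 × 0.90 + 0.3 × 0.90 + 0.2 × 0.80 = 0.88, and the patient-facing weighting to 0.4 × 0.90 + 0.2 × 0.90 + 0.4 × 0.80 = 0.86. The same tool scores differently depending on which property the context treats as most important.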

This framework serves as a thought experiment and initial discussion point. Future research will be necessary to validate the measures, adjust the weightings, and define clear thresholds for various clinical uses. Creating and confirming such reliability standards will be crucial, highlighting the broader research agenda that dentistry must now follow.

Future research needs

Despite rapid progress, essential questions still need answers. Future research must clarify how AI influences clinician performance over time, especially the risk of deskilling when core interpretive skills are delegated to machines. Studies are also necessary to determine how liability should be assigned when AI-assisted decisions lead to poor outcomes, and how insurance frameworks can evolve to meet these new challenges.

Equally important is research into health equity. Without deliberate strategies, AI may widen gaps between large, well-resourced organizations and smaller, independent practices. Understanding how adoption can be supported across different practice models will be essential to ensure that all patients benefit from advances in dental AI.

Finally, the reliability and safety of AI applications must continue to be empirically validated, with transparent benchmarks that enable clinicians to assess tools before integrating them into clinical workflows. Developing this evidence base will give practitioners and regulators the confidence to adopt AI responsibly, balancing innovation with professional accountability.

Conclusion

Artificial intelligence is no longer a distant prospect in dentistry. It is already changing documentation, diagnostics, patient communication, and specialized care. Frontier and near-frontier models form the basis, while dental-specific applications turn their abilities into practical tools that save time, support decision-making, and improve clinical accuracy.

The greatest opportunity is not just in what AI can do independently, but in how it can enhance the expertise of dental professionals. Clinicians who validate AI outputs, protect patient data, and continue refining their interpretive skills will gain the most from its integration.

The future of dental AI will be shaped by collaboration. Those who adopt responsibly will provide more consistent, efficient, and patient-focused care while ensuring that the art and science of dentistry stay firmly in human hands.

Monday morning takeaways: putting dental AI into practice

1. Validate before trusting.

Always double-check AI-generated radiographic findings or chart notes. Think of AI as a second set of eyes, not a replacement for your own.

2. Protect patient data.

Never paste confidential charts into open-access platforms. Use HIPAA- or PHIPA-compliant tools where possible, and verify where your data is stored.

3. Watch for creeping deskilling.

Continue practicing manual charting and radiographic interpretation. Skills fade if they’re not exercised.

4. Plan for costs and training.

Budget realistically for both the subscription fee and staff training time. Early adopters succeed when they account for integration, not just the purchase price.

5. Equity matters.

If you operate a smaller practice, consider shared services, consortium discounts, or academic partnerships to access AI tools that might otherwise be too expensive.

Oral Health welcomes this original article.

Disclosure statement: The authors have tested several of the AI tools referenced in this article in clinical and educational settings. They have no financial interests in the companies named, and product mentions are provided solely as illustrative examples, not endorsements.

Artificial intelligence disclosure: Generative AI tools were used to support the writing and editing of this article. Specifically, the authors used OpenAI ChatGPT (GPT-5 accessed Aug–Sep 2025) and Anthropic Claude Opus to brainstorm headings, refine for clarity, and draft suggested transitions and section rewrites on request, propose figure/table captions, and normalize reference formatting. All content was reviewed, edited, and verified by the authors, who accept full responsibility for the final manuscript.

References

1. Sasseville M, Yousefi F, Ouellet S, Naye F, Stefan T, Carnovale V, et al. The impact of AI scribes on streamlining clinical documentation: A systematic review. Healthcare. 2025;13(12):1447. https://doi.org/10.3390/healthcare13121447

2. Leung TI, Coristine AJ, Benis A. AI scribes in health care: Balancing transformative potential with responsible integration. JMIR Med Inform. 2025;13:e80898. https://doi.org/10.2196/80898

3. Tyagi M, Jain S, Ranjan M, Hassan S, Prakash N, Kumar D, et al. Artificial intelligence tools in dentistry: a systematic review on their application and outcomes. Cureus. 2025 May 29;17(5):e85062. https://doi.org/10.7759/cureus.85062

4. Budzyń K, Romańczyk M, Kitala D, Kołodziej P, Bugajski M, Adami HO, et al. Endoscopist deskilling risk after exposure to artificial intelligence in colonoscopy: a multicentre, observational study. Lancet Gastroenterol Hepatol. 2025 Aug 12. https://doi.org/10.1016/S2468-1253(25)00133-5

5. Royal College of Dental Surgeons of Ontario. Guidance: artificial intelligence in dentistry [Consultation draft; Internet]. Toronto ON: RCDSO; 2025 [cited 2025 Sep 1]. Available from: https://cdn.agilitycms.com/rcdso/pdf/consultations/Draft%20Guidance%20-%20Artificial%20Intelligence%20in%20Dentistry.pdf

6. Mallineni SK, Bhumireddy J, Nuvvula S, Pawar AM. Artificial intelligence in dentistry: A descriptive review. Bioengineering. 2024;11(12):1267. https://doi.org/10.3390/bioengineering11121267

7. Lal A, Nooruddin A, Umer F. Concerns regarding deployment of AI based applications in dentistry: a review. BDJ Open. 2025;11:27. https://doi.org/10.1038/s41405-025-00319-7

About the authors

Carly Zanatta earned her MSc in Applied Health Sciences from Brock University, where her research examined the role of diet in healing following sanative therapy. She is currently the Project Coordinator at Dr. Fritz’s Periodontal Wellness and Implant Surgery in Fonthill, where she oversees continuing education initiatives and clinical innovation projects.

Meghan Demetraki Paleolog is pursuing her Doctor of Dental Surgery (DDS) as a third-year student at the University of Toronto. This past summer, Meghan participated in the AI-Enhanced Periodontal Residency at Dr. Peter C. Fritz’s clinic in Fonthill, Ontario.

Fernanda Rosenboom is a second-year dental student at University College Cork, where she is completing her Bachelor of Dental Surgery (BDS). She also holds a Bachelor of Science in Medical Sciences from Brock University. For the past three years, Fernanda has worked to test and integrate AI technology at Dr. Peter C. Fritz’s Periodontal Wellness & Implant Surgery Clinic in Fonthill, Ontario.

Dr. Fritz is a pioneering periodontist and implant surgeon, combining clinical excellence with a deep understanding of the legal and ethical implications of emerging AI technologies in dentistry. Dr. Fritz’s interdisciplinary research focuses on innovative approaches to improving compassionate patient care. His clinic in Fonthill, Ontario, is recognized for setting new standards in patient care through the use of AI-driven applications. Dr. Fritz is committed to advancing dental science with a focus on innovation, ethics, and exploration at drfritz.ai.